Couchbase N1QL is a modern query processing engine designed to provide aggregate SQL for JSON by index on distributed data with a flexible data model. Modern databases are deployed on massive clusters. Using JSON provides a flexible data mode. N1QL supports enhanced group by index SQL for JSON to make query processing easier.

Applications and database drivers submit the N1QL query to one of the available Query nodes on a cluster. The Query node analyzes the query, uses metadata on underlying objects to figure out the optimal execution plan, which it then executes. During execution, depending on the query, using applicable indexes, query node works with index and data nodes to retrieve data and perform the planned operations. Because Couchbase is a modular clustered database, you scale out data, index, and query services to fit your performance and availability goals.

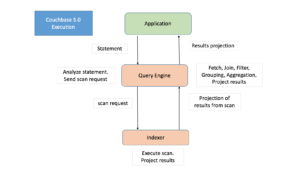

Prior to Couchbase 5.5, even when a query with GROUP BY and/or aggregates is covered by an index, the query fetched all relevant data from the indexer and performed grouping/aggregation of the data within the query engine.

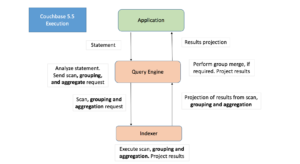

In Couchbase 5.5 query planner enhanced to intelligently requests the indexer to perform grouping and aggregation in addition to range scan for covering index. The Indexer has been enhanced to perform grouping, COUNT(), SUM(), MIN(), MAX(), AVG(), and related operations on-the-fly.

This requires no changes to the user query, but a good index design to cover the query and order the index keys is required. Not every query will benefit from this optimization, and not every index can accelerate every grouping and aggregation operation. Understanding the right patterns will help you design your indexes and queries. Index grouping and aggregation on global secondary index is supported with both storage engines: Standard GSI and Memory Optimized GSI (MOI). Index grouping and aggregation is supported in Enterprise Edition only.

This reduction step of performing the GROUP BY and Aggregation by the indexer reduces the amount of data transfer and disk I/O, resulting in:

- Improved query response time

- Improved resource utilization

- Low latency

- High scalability

- Low Total Cost of Ownership

Performance

The Index grouping and aggregations can improve query performance by orders of magnitude and reduce the latencies drastically. The following table list few sample query latency measurements.

Index :

|

1 |

CREATE INDEX idx_ts_type_country_city ON `travel-sample` (type, country, city); |

| Query | Description | 5.0 Latencies | 5.5 Latencies |

SELECT t.type, COUNT(type) AS cnt FROM travel-sample AS t WHERE t.type IS NOT NULL GROUP BY t.type; |

|

230ms | 13ms |

SELECT t.type, COUNT(1) AS cnt, COUNT(DISTINCT city) AS cntdcity FROM travel-sample AS t WHERE t.type IN [“hotel”,”airport”] GROUP BY t.type, t.country; |

|

40ms | 7ms |

SELECT t.country, COUNT(city) AS cnt FROM travel-sample AS t WHERE t.type = “airport” GROUP BY t.country; |

|

25ms | 3ms |

SELECT t.city, cnt FROM travel-sample AS t WHERE t.type IS NOT NULL GROUP BY t.city LETTING cnt = COUNT(city) HAVING cnt > 0 ; |

|

300ms | 160ms |

Index Grouping and Aggregation Overview

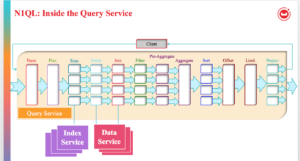

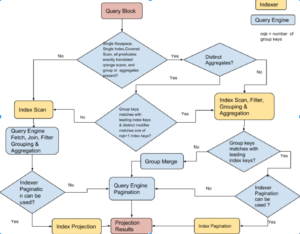

The above figure shows all the possible phases a SELECT query goes through to return the results. The filtering process takes the initial keyspace and produces an optimal subset of the documents the query is interested in. To produce the smallest possible subset, indexes are used to apply as many predicates as possible. Query predicate indicates the subset of the data interested. During the query planning phase, we select the indexes to be used. Then, for each index, we decide the predicates to be applied by each index. The query predicates are translated into range scans in the query plan and passed to Indexer.

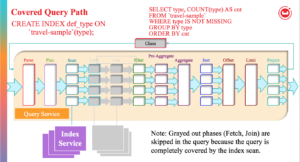

If the query doesn’t have JOINs and is covered by index, both Fetch and Join phases can be eliminated.

When all predicates are exactly translated to range scans Filter phase also can be eliminated. In that situation Scan and Aggregates are side by side, and since indexer has ability to do aggregation that phase can be done on indexer node. In some cases Sort, Offset, Limit phases can also be done indexer node.

The following flow chart describes how query planner decides to perform index aggregation for each query block of the query. If the index aggregation is not possible aggregations are done in query engine.

For example, let’s compare the previous vs. current performance of using GROUP BY and examine the EXPLAIN plan of the following query that uses an index defined on the Couchbase travel-sample bucket:

|

1 |

CREATE INDEX `def_type` ON `travel-sample`(`type`); |

Consider the query:

|

1 2 3 4 |

SELECT type, COUNT(type) FROM `travel-sample` WHERE type IS NOT MISSING GROUP BY type; |

Before Couchbase version 5.5, this query engine fetched relevant data from the indexer and grouping and aggregation of the data is done within query engine. This simple query takes about 250 ms.

Now, in Couchbase version 5.5, this query use the same def_type index, but executes in under 20 ms. In the explain below, you can see fewer steps and the lack of the grouping step after the index scan because the index scan step does the grouping and aggregation as well.

As the data and query complexity grows, the performance benefit (both latency and throughput) will grow as well.

Understanding EXPLAIN of Index Grouping and Aggregation

Looking at the explain of the query:

|

1 |

EXPLAIN SELECT type, COUNT(type) FROM `travel-sample` WHERE type IS NOT MISSING GROUP BY type;{ |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 |

{ "plan": { "#operator": "Sequence", "~children": [ { "#operator": "IndexScan3", "covers": [ "cover ((`travel-sample`.`type`))", "cover ((meta(`travel-sample`).`id`))", "cover (count(cover ((`travel-sample`.`type`))))" ], "index": "def_type", "index_group_aggs": { "aggregates": [ { "aggregate": "COUNT", "depends": [ 0 ], "expr": "cover ((`travel-sample`.`type`))", "id": 2, "keypos": 0 } ], "depends": [ 0 ], "group": [ { "depends": [ 0 ], "expr": "cover ((`travel-sample`.`type`))", "id": 0, "keypos": 0 } ] }, "index_id": "b948c92b44c2739f", "index_projection": { "entry_keys": [ 0, 2 ] }, "keyspace": "travel-sample", "namespace": "default", "spans": [ { "exact": true, "range": [ { "inclusion": 1, "low": "null" } ] } ], "using": "gsi" }, { "#operator": "Parallel", "~child": { "#operator": "Sequence", "~children": [ { "#operator": "InitialProject", "result_terms": [ { "expr": "cover ((`travel-sample`.`type`))" }, { "expr": "cover (count(cover ((`travel-sample`.`type`))))" } ] }, { "#operator": "FinalProject" } ] } } ] }, "text": "SELECT type, COUNT(type) FROM `travel-sample` WHERE type IS NOT MISSING GROUP BY type;" } |

You will see “index_group_aggs” in the IndexScan section (i.e “#operator”: “IndexScan3”). If “index_group_aggs” is MISSING then query service is performing grouping and aggregation. If present query is using Index grouping and aggregation and it has all relevant information indexer required for grouping and aggregation. The following table describe how to interpret the various information of index_group_aggs object.

| Field Name | Description | Line numbers from Example | Explain Text in Example |

| aggregates | Array of Aggregate objects, and each object represents one aggregate. The absence of this item means only group by is present in the query. | 14-24 | aggregates |

| aggregate | Aggregate operation (MAX/MIN/SUM/COUNT/COUNTN). | 16 | COUNT |

| distinct | Aggregate modifier is DISTINCT | – | False(When true only it appears) |

| depends | List of index key positions(starting with 0) the aggregate expression depends on. | 17-19 | 0 (because type is 0th index key of def_type index) |

| expr | aggregate expression | 20 | cover ((travel-sample.type)) |

| id | Unique ID given internally and will be used in index_projection | 21 | 2 |

| keypos | Indicator to that tells use expression at the index key position or from the expr field.

|

22 | 0 (because type is 0th index key of def_type index) |

| depends | List of index key positions the groups/aggregates expressions depends on (consolidated list) | 25-27 | 0 |

| group | Array of GROUP BY objects, and each object represents one group key. The absence of this item means there is no GROUP BY clause present in the query. | 28-37 | group |

| depends | List of index key positions(starting with 0) the group expression depends on. | 30-32 | 0

(because type is 0th key of index key of def_type index) |

| expr | group expression. | 33 | cover ((travel-sample.type)) |

| id | Unique ID given internally and will be used in index_projection. | 34 | 0 |

| keypos | Indicator to that tells use expression at the index key position or from the expr field.

|

35 | 0 (because type is 0th index key of def_type index) |

The covers field is array and it has all the index keys, document key(META().id), group keys expressions that are not exactly matched with index keys (sorted by id), aggregates sorted by id. Also “Index_projection” will have all the group/aggregate ids.

|

1 2 3 4 5 |

"covers": [ "cover ((`travel-sample`.`type`))", ← Index key (0) "cover ((meta(`travel-sample`).`id`))", ← document key (1) "cover (count(cover ((`travel-sample`.`type`))))" ← aggregate (2) ] |

In above case group expression type is same Index key of index def_type. It is not included twice.

Details of Index Grouping and Aggregation

We will use examples to show how Index grouping and aggregations works. To follow the examples please create a bucket “default” and insert the following documents:

|

1 2 3 4 5 6 7 8 9 |

INSERT INTO default (KEY,VALUE) VALUES ("ga0001", {"c0":1, "c1":10, "c2":100, "c3":1000, "c4":10000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0002", {"c0":1, "c1":20, "c2":200, "c3":2000, "c4":20000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0003", {"c0":1, "c1":10, "c2":300, "c3":3000, "c4":30000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0004", {"c0":1, "c1":20, "c2":400, "c3":4000, "c4":40000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0005", {"c0":2, "c1":10, "c2":100, "c3":5000, "c4":50000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0006", {"c0":2, "c1":20, "c2":200, "c3":6000, "c4":60000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0007", {"c0":2, "c1":10, "c2":300, "c3":7000, "c4":70000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}), VALUES ("ga0008", {"c0":2, "c1":20, "c2":400, "c3":8000, "c4":80000, "a1":[{"id":1}, {"id":1}, {"id":2}, {"id":3}, {"id":4}, {"id":5}]}); |

Example 1: Group by leading index keys

Let consider the following query and index:

|

1 2 3 4 5 6 7 8 |

SELECT d.c0 AS c0, d.c1 AS c1, SUM(d.c3) AS sumc3, AVG(d.c4) AS avgc4, COUNT(DISTINCT d.c2) AS dcountc2 FROM default AS d WHERE d.c0 > 0 GROUP BY d.c0, d.c1 ORDER BY d.c0, d.c1 OFFSET 1 LIMIT 2; |

Required Index:

|

1 |

CREATE INDEX idx1 ON default(c0, c1, c2, c3, c4); |

The query has GROUP BY and multiple aggregates, some of aggregates has DISTINCT modifier. The query can be covered by index idx1 and the predicate (d.c0 > 0) can be converted into exact range scan and passed it to index scan. So, the index and query combination qualifies Index grouping and aggregations.

Indexes are naturally ordered and grouped by the order of the index key definition. In the above query, the GROUP BY keys (d.c0, d.c1) exactly matches with the leading keys (c0, c1) of the index. Therefore, index has each group data together, indexer will produce one row per group i.e. Full aggregation. Also, query has aggregate that has DISTINCT modifier and it exactly matches with one of the index keys with position less than or equal to number of group keys plus one (i.e. there 2 group keys, DISTINCT modifier can be any one of index key at position 0,1,2 because index key followed by group keys and DISTINCT modifier can applied without sort). Therefore, the query above is suitable for indexer to handle grouping and aggregation.

If group by missing one of the leading index key and there is equality predicate, then special optimization is done by treating the index key implicitly present in group keys and determine if Full aggregation is possible or not. For partition index the all the partition keys needs to present in the group keys to generate Full aggregations.

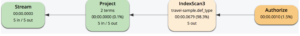

The above graphical execution tree shows index scan (IndexScan3) performing scan and index grouping aggregations. The results from the index scan are projected.

Let’s look at the text based explain :

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 |

{ "plan": { "#operator": "Sequence", "~children": [ { "#operator": "Sequence", "~children": [ { "#operator": "IndexScan3", "as": "d", "covers": [ "cover ((`d`.`c0`))", "cover ((`d`.`c1`))", "cover ((`d`.`c2`))", "cover ((`d`.`c3`))", "cover ((`d`.`c4`))", "cover ((meta(`d`).`id`))", "cover (count(distinct cover ((`d`.`c2`))))", "cover (countn(cover ((`d`.`c4`))))", "cover (sum(cover ((`d`.`c3`))))", "cover (sum(cover ((`d`.`c4`))))" ], "index": "idx1", "index_group_aggs": { "aggregates": [ { "aggregate": "COUNT", "depends": [ 2 ], "distinct": true, "expr": "cover ((`d`.`c2`))", "id": 6, "keypos": 2 }, { "aggregate": "COUNTN", "depends": [ 4 ], "expr": "cover ((`d`.`c4`))", "id": 7, "keypos": 4 }, { "aggregate": "SUM", "depends": [ 3 ], "expr": "cover ((`d`.`c3`))", "id": 8, "keypos": 3 }, { "aggregate": "SUM", "depends": [ 4 ], "expr": "cover ((`d`.`c4`))", "id": 9, "keypos": 4 } ], "depends": [ 0, 1, 2, 3, 4 ], "group": [ { "depends": [ 0 ], "expr": "cover ((`d`.`c0`))", "id": 0, "keypos": 0 }, { "depends": [ 1 ], "expr": "cover ((`d`.`c1`))", "id": 1, "keypos": 1 } ] }, "index_id": "d06df7c5d379cd5", "index_order": [ { "keypos": 0 }, { "keypos": 1 } ], "index_projection": { "entry_keys": [ 0, 1, 6, 7, 8, 9 ] }, "keyspace": "default", "limit": "2", "namespace": "default", "offset": "1", "spans": [ { "exact": true, "range": [ { "inclusion": 0, "low": "0" } ] } ], "using": "gsi" }, { "#operator": "Parallel", "maxParallelism": 1, "~child": { "#operator": "Sequence", "~children": [ { "#operator": "InitialProject", "result_terms": [ { "as": "c0", "expr": "cover ((`d`.`c0`))" }, { "as": "c1", "expr": "cover ((`d`.`c1`))" }, { "as": "sumc3", "expr": "cover (sum(cover ((`d`.`c3`))))" }, { "as": "avgc4", "expr": "(cover (sum(cover ((`d`.`c4`)))) / cover (countn(cover ((`d`.`c4`)))))" }, { "as": "dcountc2", "expr": "cover (count(distinct cover ((`d`.`c2`))))" } ] }, { "#operator": "FinalProject" } ] } } ] }, { "#operator": "Limit", "expr": "2" } ] }, "text": "SELECT d.c0 AS c0, d.c1 AS c1, SUM(d.c3) AS sumc3, AVG(d.c4) AS avgc4, COUNT(DISTINCT d.c2) AS dcountc2 FROM default AS d\nWHERE d.c0 > 0 GROUP BY d.c0, d.c1 ORDER BY d.c0, d.c1 OFFSET 1 LIMIT 2;" } |

- The “index_group_aggs” (lines 24-89) in the IndexScan section (i.e “#operator”: “IndexScan3”) shows query using index grouping and aggregations.

- If query uses index grouping and aggregation the predicates are exactly converted to range scans and passed to index scan as part of spans, so there will not be any Filter operator in the explain.

- As group by keys exactly match the leading index keys, indexer will produce full aggregations. Therefore, we also eliminate grouping in query service (There is no InitialGroup, IntermediateGroup, FinalGroup operators in the explain).

- Indexer projects “index_projection” (lines 99-107) including all group keys and aggregates.

- Query ORDER BY matches with leading index keys and GROUP BY is on leading index keys we can use index order. This can be found in explain (lines 91-98) and will not use “#operator”: “Order” between line 164-165.

- As query can use index order and there is no HAVING clause in the query the “offset” and “limit” values can be passed to indexer.

- This can be found at line 112, 110. The “offset” can be applied only once you will not see “#operator”: “Offset” between line 164-165, But re-applying “limit” is no-op. This can be seen at line 165-168.

- Query contains AVG(x) it has been rewritten as SUM(x)/COUNTN(x). The COUNTN(x) only counts when x is numeric value.

Example 2: Group by leading index keys, LETTING, HAVING

Let consider the following query and index:

|

1 2 3 4 5 6 7 8 9 10 |

SELECT d.c0 AS c0, d.c1 AS c1, sumc3 AS sumc3, AVG(d.c4) AS avgc4, COUNT(DISTINCT d.c2) AS dcountc2 FROM default AS d WHERE d.c0 > 0 GROUP BY d.c0, d.c1 LETTING sumc3 = SUM(d.c3) HAVING sumc3 > 0 ORDER BY d.c0, d.c1 OFFSET 1 LIMIT 2; |

Required Index:

|

1 |

CREATE INDEX idx1 ON default(c0, c1, c2, c3, c4); |

The above query is similar to Example 1 but it has LETTING, HAVING clause. Indexer will not be able to handle these and thus LETTING and HAVING clauses are applied in query service after grouping and aggregations. Therefore you see Let, Filter operators after IndexScan3 in execution tree. Having clause is filter and further eliminates items thus “offset”, “limit” can’t be pushed to indexer and need to be applied in query service, but we still can use index order.

Example 3: Group by non-leading index keys

Let consider the following query and index:

|

1 2 3 4 5 6 7 8 |

SELECT d.c1 AS c1, d.c2 AS c2, SUM(d.c3) AS sumc3, AVG(d.c4) AS avgc4, COUNT(d.c2) AS countc2 FROM default AS d WHERE d.c0 > 0 GROUP BY d.c1, d.c2 ORDER BY d.c1, d.c2 OFFSET 1 LIMIT 2; |

Required Index:

|

1 |

CREATE INDEX idx1 ON default(c0, c1, c2, c3, c4); |

The query has GROUP BY and multiple aggregates. The query can be covered by index idx1 and the predicate (d.c0 > 0) can be converted into exact range scan and passed it to index scan. So, the index and query combination qualifies Index grouping and aggregations.

In the above query, the GROUP BY keys (d.c1, d.c2) do NOT match the leading keys (c0, c1) of the index. The groups are scattered across the index. Therefore, indexer will produce multiple rows per each group i.e. Partial aggregation. In case of partial aggregation query service does group merge, query can’t use index order or push “offset”, “limit” to indexer. In case of partial aggregation if any aggregate has DISTINCT modifier index grouping and aggregation is not possible. The query above is suitable for indexer to handle grouping and aggregation.

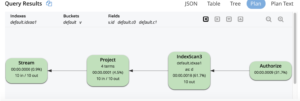

The above graphical execution tree shows index scan (IndexScan3) performing scan and index grouping aggregations. The results from the index scan are grouped again and projected.

Let’s look at the text based explain :

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 |

{ "plan": { "#operator": "Sequence", "~children": [ { "#operator": "Sequence", "~children": [ { "#operator": "IndexScan3", "as": "d", "covers": [ "cover ((`d`.`c0`))", "cover ((`d`.`c1`))", "cover ((`d`.`c2`))", "cover ((`d`.`c3`))", "cover ((`d`.`c4`))", "cover ((meta(`d`).`id`))", "cover (count(cover ((`d`.`c2`))))", "cover (countn(cover ((`d`.`c4`))))", "cover (sum(cover ((`d`.`c3`))))", "cover (sum(cover ((`d`.`c4`))))" ], "index": "idx1", "index_group_aggs": { "aggregates": [ { "aggregate": "COUNT", "depends": [ 2 ], "expr": "cover ((`d`.`c2`))", "id": 6, "keypos": 2 }, { "aggregate": "COUNTN", "depends": [ 4 ], "expr": "cover ((`d`.`c4`))", "id": 7, "keypos": 4 }, { "aggregate": "SUM", "depends": [ 3 ], "expr": "cover ((`d`.`c3`))", "id": 8, "keypos": 3 }, { "aggregate": "SUM", "depends": [ 4 ], "expr": "cover ((`d`.`c4`))", "id": 9, "keypos": 4 } ], "depends": [ 1, 2, 3, 4 ], "group": [ { "depends": [ 1 ], "expr": "cover ((`d`.`c1`))", "id": 1, "keypos": 1 }, { "depends": [ 2 ], "expr": "cover ((`d`.`c2`))", "id": 2, "keypos": 2 } ], "partial": true }, "index_id": "d06df7c5d379cd5", "index_projection": { "entry_keys": [ 1, 2, 6, 7, 8, 9 ] }, "keyspace": "default", "namespace": "default", "spans": [ { "exact": true, "range": [ { "inclusion": 0, "low": "0" } ] } ], "using": "gsi" }, { "#operator": "Parallel", "~child": { "#operator": "Sequence", "~children": [ { "#operator": "InitialGroup", "aggregates": [ "sum(cover (count(cover ((`d`.`c2`)))))", "sum(cover (countn(cover ((`d`.`c4`)))))", "sum(cover (sum(cover ((`d`.`c3`)))))", "sum(cover (sum(cover ((`d`.`c4`)))))" ], "group_keys": [ "cover ((`d`.`c1`))", "cover ((`d`.`c2`))" ] } ] } }, { "#operator": "IntermediateGroup", "aggregates": [ "sum(cover (count(cover ((`d`.`c2`)))))", "sum(cover (countn(cover ((`d`.`c4`)))))", "sum(cover (sum(cover ((`d`.`c3`)))))", "sum(cover (sum(cover ((`d`.`c4`)))))" ], "group_keys": [ "cover ((`d`.`c1`))", "cover ((`d`.`c2`))" ] }, { "#operator": "FinalGroup", "aggregates": [ "sum(cover (count(cover ((`d`.`c2`)))))", "sum(cover (countn(cover ((`d`.`c4`)))))", "sum(cover (sum(cover ((`d`.`c3`)))))", "sum(cover (sum(cover ((`d`.`c4`)))))" ], "group_keys": [ "cover ((`d`.`c1`))", "cover ((`d`.`c2`))" ] }, { "#operator": "Parallel", "~child": { "#operator": "Sequence", "~children": [ { "#operator": "InitialProject", "result_terms": [ { "as": "c1", "expr": "cover ((`d`.`c1`))" }, { "as": "c2", "expr": "cover ((`d`.`c2`))" }, { "as": "sumc3", "expr": "sum(cover (sum(cover ((`d`.`c3`)))))" }, { "as": "avgc4", "expr": "(sum(cover (sum(cover ((`d`.`c4`))))) / sum(cover (countn(cover ((`d`.`c4`))))))" }, { "as": "countc2", "expr": "sum(cover (count(cover ((`d`.`c2`)))))" } ] } ] } } ] }, { "#operator": "Order", "limit": "2", "offset": "1", "sort_terms": [ { "expr": "cover ((`d`.`c1`))" }, { "expr": "cover ((`d`.`c2`))" } ] }, { "#operator": "Offset", "expr": "1" }, { "#operator": "Limit", "expr": "2" }, { "#operator": "FinalProject" } ] }, "text": "SELECT d.c1 AS c1, d.c2 AS c2, SUM(d.c3) AS sumc3, AVG(d.c4) AS avgc4, COUNT(d.c2) AS countc2 FROM default AS d WHERE d.c0 > 0 GROUP BY d.c1, d.c2 ORDER BY d.c1, d.c2 OFFSET 1 LIMIT 2;" } |

- The “index_group_aggs” (lines 24-88) in the IndexScan section (i.e “#operator”: “IndexScan3”) shows query using index grouping and aggregations.

- If query uses index grouping and aggregation the predicates are exactly converted to range scans and passed to index scan as part of spans, so there will not be any Filter operator in the explain.

- As group by keys did NOT match the leading index keys, indexer will produce partial aggregations. This can be seen as “partial”:true inside “index_group_aggs” at line 87. Query service does Group merging (see line 119-161)

- Indexer projects “index_projection” (lines 91-99) containing group keys and aggregates.

- If the Indexer generates partial aggregations query can’t use index order and requires explicit sort, and “offset”, “limit” can’t be pushed to indexer. The plan will have explicit “Order”, “Offset”, and “Limit” operators (line 197 – 217)

- Query contains AVG(x) which has been rewritten as SUM(x)/COUNTN(x). The COUNTN(x) only counts when x is numeric value.

- During Group merge

- MIN becomes MIN of MIN

- MAX becomes MAX of MAX

- SUM becomes SUM of SUM

- COUNT becomes SUM of COUNT

- CONTN becomes SUM of COUNTN

- AVG becomes SUM of SUM divided by SUM of COUNTN

Example 4: Group and Aggregation with array index

Let consider the following query and index:

|

1 2 3 4 5 6 7 8 |

SELECT d.c0 AS c0, d.c1 AS c1, SUM(d.c3) AS sumc3, AVG(d.c4) AS avgc4, COUNT(DISTINCT d.c2) AS dcountc2 FROM default AS d WHERE d.c0 > 0 AND d.c1 >= 10 AND ANY v IN d.a1 SATISFIES v.id = 3 END GROUP BY d.c0, d.c1 ORDER BY d.c0, d.c1 OFFSET 1 LIMIT 2; |

Required Index:

|

1 |

CREATE INDEX idxad1 ON default(c0, c1, DISTINCT ARRAY v.id FOR v IN a1 END, c2, c3, c4); |

The query has GROUP BY and multiple aggregates, some of aggregates has DISTINCT modifier. The query predicate has ANY clause and query can be covered by array index index idxad1. The predicate (d.c0 > 0 AND d,c11 >= 10 AND ANY v IN d.a1 SATISFIES v.id = 3 END ) can be converted into exact range scans and passed to index scan. For array index Indexer maintain separate element for each array index key, in order to use index group and aggregation the SATISFIES predicate must have a single equality predicate and the array index key must have DISTINCT modifier. Therefore index and query combination is suitable to handle Index grouping and aggregations.

This example is similar to example 1 except it uses an array index. The above graphical execution tree shows index scan (IndexScan3) performing scan, index grouping aggregations, order, offset and limit. The results from the index scan are projected.

Example 5: Group and Aggregation of UNNEST Operation

Let consider the following query and index:

|

1 2 3 4 5 |

SELECT v.id AS id, d.c0 AS c0, SUM(v.id) AS sumid, AVG(d.c1) AS avgc1 FROM default AS d UNNEST d.a1 AS v WHERE v.id > 0 GROUP BY v.id, d.c0; |

Required Index:

|

1 |

CREATE INDEX idxaa1 ON default(ALL ARRAY v.id FOR v IN a1 END, c0, c1); |

The query has GROUP BY and multiple aggregates. The query has UNNEST on array d.a1 and have predicate on the array key (v.id > 0). The index idxaa1 qualifies query (For Unnest to use Array index for Index scan the array index must be leading key and array variable in the index definition must match with UNNEST alias). The predicate (v.id > 0) can be converted into exact range scans and passed to index scan. Therefore index and query combination is suitable to handle Index grouping and aggregations.

The above graphical execution tree shows index scan (IndexScan3) performing scan, index grouping aggregations. The results from the index scan are projected. The UNNEST is special type of JOIN between parent and each array element. Therefore, the UNNEST repeats the parent document fields (d.c0, d.c1) and the d.c0, dc.1 reference would have duplicates compared to the original d documents (Need to aware this while using in SUM(), AVG()).

Rules for Index Grouping and Aggregation

The Index grouping and aggregation are per query block, and decision on whether or not use index grouping/aggregation is made only after index selection process.

- Query block should not contain Joins, NEST, SUBqueries.

- Query block must be covered by singline index.

- Query block should not contain ARRAY_AGG()

- Query block can’t be correlated

- All the predicates must be exactly translated into range scans.

- GROUP BY, Aggregate expressions can’t reference any subquires, named parameters, positional parameters.

- GROUP BY keys, aggregate expressions can be index keys, document key, expression on index keys, or expression on document key

- Index needs to be able to do grouping and aggregation on all the aggregates in query block otherwise no index aggregation. (i.e. ALL or None)

- Aggregate contain DISTINCT modifier

- The group keys must exactly match with leading index keys (if the query contains equality predicate on the index key, then it assumes this index key is implicitly included in GROUP keys if not already present).

- The aggregate expression must be on one of the n+1 leading index keys (n represent number of group keys).

- In case of partition index the partition keys must exactly match with group keys.

Summary

When you analyze the explain plan, correlate the predicates in the explain to the spans and make sure all the predicate exactly translated to range scans and query is covered. Ensure query using index grouping and aggregations, and if possible query using full aggregations from indexer by adjusting index keys for better performance.