Choosing the right fit – Immediate or Eventual Persistence?

With the emergence of NoSQL databases, “eventual persistence” has become an option for accelerating reads and writes to the database. Persistence, popularly known as durability to disk, has long been recognized and cherished as one of the desirable characteristics of an ACID (Atomicity, Consistency, Isolation and Durability) compliant database system.

When I first heard of eventual persistence, it rattled my strong relational database mindset, in which durability is like a religious belief.

Over time I have come to realize that not all applications need immediate persistence, especially when the trade-off is performance and cost, because reads from and writes to disk are slow. The advantages of eventual persistence sometimes greatly outweigh those of immediate persistence.

It is time to exorcise the fear of eventual persistence from database users!

Immediate and Eventual persistence – So what is the difference?

Simply put, eventual persistence means that every time you write data into the database, it appears as if the row has been successfully written to disk, because the write is acknowledged by the database system. The host triggering the write gets an acknowledgement back from the database stating that the write succeeded; under the covers, however, the database has not actually written the data to disk yet, but to an intermediate layer such as memory or a file system cache. The actual write to disk is queued up and happens asynchronously.
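To make the eventual-persistence write path concrete, here is a minimal Python sketch, assuming a toy single-node key-value store. `EventualStore`, its methods and its JSON-lines log file are hypothetical names invented for illustration, not any product's API. The write is acknowledged as soon as it lands in memory, and a background thread drains a queue to disk later.

```python
import json
import os
import queue
import tempfile
import threading
from typing import Dict, Optional, Tuple


class EventualStore:
    """Hypothetical toy store: acknowledges a write once it is in memory
    and persists it to disk later on a background thread."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.memory: Dict[str, str] = {}
        self.disk_queue: "queue.Queue[Tuple[str, str]]" = queue.Queue()
        # Daemon thread that performs the deferred (asynchronous) disk writes.
        threading.Thread(target=self._flush_loop, daemon=True).start()

    def write(self, key: str, value: str) -> bool:
        self.memory[key] = value           # 1. write to the in-memory layer
        self.disk_queue.put((key, value))  # 2. queue the disk write
        return True                        # 3. acknowledge right away

    def read(self, key: str) -> Optional[str]:
        return self.memory.get(key)        # reads are served from memory

    def _flush_loop(self) -> None:
        while True:
            key, value = self.disk_queue.get()
            with open(self.path, "a", encoding="utf-8") as f:
                f.write(json.dumps({"key": key, "value": value}) + "\n")
                f.flush()
                os.fsync(f.fileno())       # the record is durable only after this
            self.disk_queue.task_done()

    def wait_until_persisted(self) -> None:
        self.disk_queue.join()             # block until every queued write is on disk


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        store = EventualStore(os.path.join(tmp, "data.log"))
        store.write("user:1", "alice")     # acknowledged before it reaches disk
        print(store.read("user:1"))        # alice
        store.wait_until_persisted()
```

If the process dies after `write` returns but before the flush thread has run, the acknowledged write exists only in memory. That window is exactly what the replication and high availability features argued for in this blog are meant to cover.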

Immediate persistence means the write to disk is synchronous and the write is acknowledged only after the data is written to disk.
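For contrast, here is a minimal sketch of the immediate-persistence path under the same kind of assumptions: `ImmediateStore` and its log format are hypothetical. The caller gets its acknowledgement only after the record has been flushed and `os.fsync` has returned.

```python
import json
import os
import tempfile
from typing import Dict, Optional


class ImmediateStore:
    """Hypothetical toy store: a write is acknowledged only after it has
    been forced to disk with fsync."""

    def __init__(self, path: str) -> None:
        self.path = path
        self.memory: Dict[str, str] = {}

    def write(self, key: str, value: str) -> bool:
        # 1. Append the record to the on-disk log and force it to the device.
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps({"key": key, "value": value}) + "\n")
            f.flush()             # move Python's buffer into the OS page cache
            os.fsync(f.fileno())  # ask the OS to write its cache to the storage device
        # 2. Only now update memory and acknowledge the caller.
        self.memory[key] = value
        return True

    def read(self, key: str) -> Optional[str]:
        return self.memory.get(key)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "data.log")
        store = ImmediateStore(path)
        store.write("user:1", "alice")  # returns only after fsync completes
        with open(path, encoding="utf-8") as f:
            print(f.read().strip())     # {"key": "user:1", "value": "alice"}
```

Every acknowledged write pays for a synchronous disk flush, which is the latency cost discussed in this blog. Even then, the guarantee relies on the storage stack actually honoring the flush request.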

A few seconds after this is mentioned to 3NFers (my name for relational database users), either the meeting ends very rapidly or database solutions that provide eventual persistence are dismissed as unviable. For them, acknowledging a write only after it has been persisted to disk has always been the only way.

This blog examines whether certain workloads can function with a deferred write to disk in favor of performance and cost, IF the initial write goes to a dependable, fully redundant, fail-safe layer with several layers of high availability options.

Factors to consider when choosing the right persistence option

I think that in many, many scenarios we can get by with eventual persistence in favor of performance and cost. Let us examine this.

Before I delve any deeper into this, let me categorically state that durability is a great feature, but it comes at the cost of performance and higher CAPEX/OPEX. CAPEX/OPEX are higher because you need to invest in faster storage in order to deliver better performance. If you can get by because your product has other redundancy features built in, and performance is a huge consideration, then you should consider eventual persistence as a possible solution.

Let us get back to the basics for a second and examine what an application user really wants from their interaction with a database:

- Write data quickly

- Read the data I have written every time with consistent answers

- Never lose what I have written. What this actually means is: minimize data loss. Observe that I use the word minimize, because there are several scenarios where even the “iron-clad” guarantee of relational databases can fall apart.

RDBMSs have been around for a long, long time, and they have certainly minimized and addressed many of these failure scenarios. Their guarantees make a whole lot of sense for very sensitive workloads, where inconsistent results could lead to lost opportunities. My point is that it is an all-or-nothing solution. For applications that don’t really need this kind of durability, the performance trade-off proves to be an expensive one.

What if your database solution let you write data quickly, consistently read what you have written, never lose what you have written, and provided transaction isolation without sacrificing speed and performance, at a fraction of the cost of what you currently have on the floor? Would that resonate with you? I believe so.

What if your database solution:

- Is memory-centric, which lets you write data very fast.

- Keeps your data in memory, which lets you read it back very fast and consistently read what you have written.

- Has built-in HA features that keep copies of your data in a rack-aware, datacenter-aware implementation, so that if a node goes down before a write has been persisted to disk, you can always rely on other nodes or another cluster to pick up the slack.
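The HA idea in the last bullet can be sketched in Python, assuming a toy in-process cluster. `Node`, `ReplicatedCluster`, the CRC32-based placement and the one-copy-per-rack rule are hypothetical simplifications, not how any particular product places replicas. A write is acknowledged once copies sit in memory on nodes in different racks, so losing one node before its disk flush does not lose the acknowledged write.

```python
import zlib
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    rack: str
    alive: bool = True
    memory: Dict[str, str] = field(default_factory=dict)


class ReplicatedCluster:
    """Hypothetical sketch: a write is acknowledged once it is in memory on
    an active node plus `replicas` copies, each on a different rack."""

    def __init__(self, nodes: List[Node], replicas: int = 1) -> None:
        self.nodes = nodes
        self.replicas = replicas

    def _placement(self, key: str) -> List[Node]:
        live = [n for n in self.nodes if n.alive]
        if not live:
            raise RuntimeError("no live nodes")
        start = zlib.crc32(key.encode()) % len(live)  # deterministic starting node
        chosen: List[Node] = []
        used_racks = set()
        # Walk the live nodes, taking at most one node per rack (rack awareness).
        for i in range(len(live)):
            node = live[(start + i) % len(live)]
            if node.rack not in used_racks:
                chosen.append(node)
                used_racks.add(node.rack)
            if len(chosen) == 1 + self.replicas:
                return chosen
        raise RuntimeError("not enough racks for the requested replica count")

    def write(self, key: str, value: str) -> bool:
        for node in self._placement(key):
            node.memory[key] = value  # in-memory copy on the active node and replicas
        return True                   # acknowledged before any disk write

    def read(self, key: str) -> Optional[str]:
        # Fail over to any surviving node that holds a copy.
        for node in self.nodes:
            if node.alive and key in node.memory:
                return node.memory[key]
        return None


if __name__ == "__main__":
    nodes = [Node("n1", "rack-a"), Node("n2", "rack-a"),
             Node("n3", "rack-b"), Node("n4", "rack-b")]
    cluster = ReplicatedCluster(nodes, replicas=1)
    cluster.write("order:42", "paid")
    # Simulate one node holding the write crashing before it flushed to disk.
    next(n for n in nodes if "order:42" in n.memory).alive = False
    print(cluster.read("order:42"))  # paid
```

Placing copies on different racks means a single rack failure takes out at most one copy; the same reasoning extends to replicating to another cluster in a second data center.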

The advantages of this architecture are:

- Applications that can tolerate some very, very rare cases of dropped data can still function, because several levels of redundancy are built in.

- Speed and performance are not sacrificed.

- Cost of the solution is low.

Can we ensure there is never any data loss in a system, be it relational or NoSQL?

The answer is actually no, because you can only minimize the number of errors, not completely eliminate them. While many will argue that writing to disk is the safest way to protect data, having come from this world, I have seen and repaired several scenarios where data was lost due to bad drives, data corruption, failed ETL jobs, etc. Existing solutions do not necessarily provide a 100% guarantee that your data is safe. The point is that we have to consider the various dimensions involved and the trade-offs we have to make.

If you choose eventual persistence, then it is imperative to ensure that:

1) You have built-in inter-cluster high availability features to compensate for data loss caused by not being able to persist data.

2) You have several layers of redundancy, either via cross-cluster HA features or replication to alternate data centers.

3) You have robust QA processes that can quickly detect and repair erroneous or lost data.

4) Performance, speed and cost are truly paramount to your business.

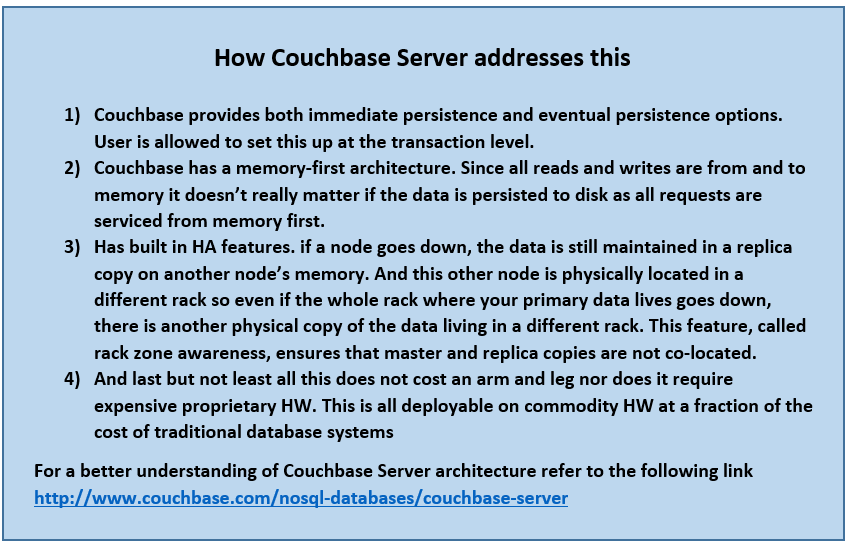

Couchbase Server provides the traditional durability functionality along with push-button scale-up and scale-out features and blazing performance, at a fraction of the cost of traditional database solutions.

Conclusion

Durability is desirable, but depending on the latency requirements, the application profile and the expense one is willing to incur, we could get by with eventual persistence. If your database solution

- Has built-in redundancy and multiple levels of high availability features that minimize data loss

- Can provide blazing performance

- Comes at a fraction of the cost of the solution you currently have

then eventual persistence is more than a viable solution.

The chances that an application will experience data loss in a fully durable system are almost as high as in an eventually persistent system, yet the durable system carries the additional overhead of cost, performance and scalability. In that case, isn’t durability overrated?

_____________________________________________________________________________________

This article was written by Sandhya Krishnamurthy, Senior Solutions Engineer at Couchbase, a leading provider of NoSQL databases.

Contact the author at sandhya.krishnamurthy@couchbase.com
